The library, by conversation.

Sacred Grove is an immersive reading app for scripture and wisdom literature. The first version is iOS, built in SwiftUI, with a bundled corpus of public-domain translations and a design system carried in code. It is the project where I am testing what AI-first design actually means when the brief is “build the whole thing, not just a screen.”

This entry is a planting note. The full case study will come once the rooms are furnished. For now, here is how the project is being made.

Conversation as the first artifact

The starting point was not a sketch. It was a conversation.

Most projects begin with a moodboard, a wireframe, or a brief. Sacred Grove began in a chat window, working with an LLM agent on what a serious long-form reader should feel like in 2026. The output was a document, then another document, then a small library of working memos: a product thesis, a content model, a design principles list. Each one came out of an exchange where I pushed on the model and the model pushed back.

The discipline I keep relearning is that a chat is not a deliverable. The deliverable is a written artifact you can hand to the next collaborator, the next agent, or the next version of yourself. I now treat the LLM session as an interview that produces specifications, not a brainstorm that produces vibes.

Collecting the corpus

Before any UI, the question was what the app reads.

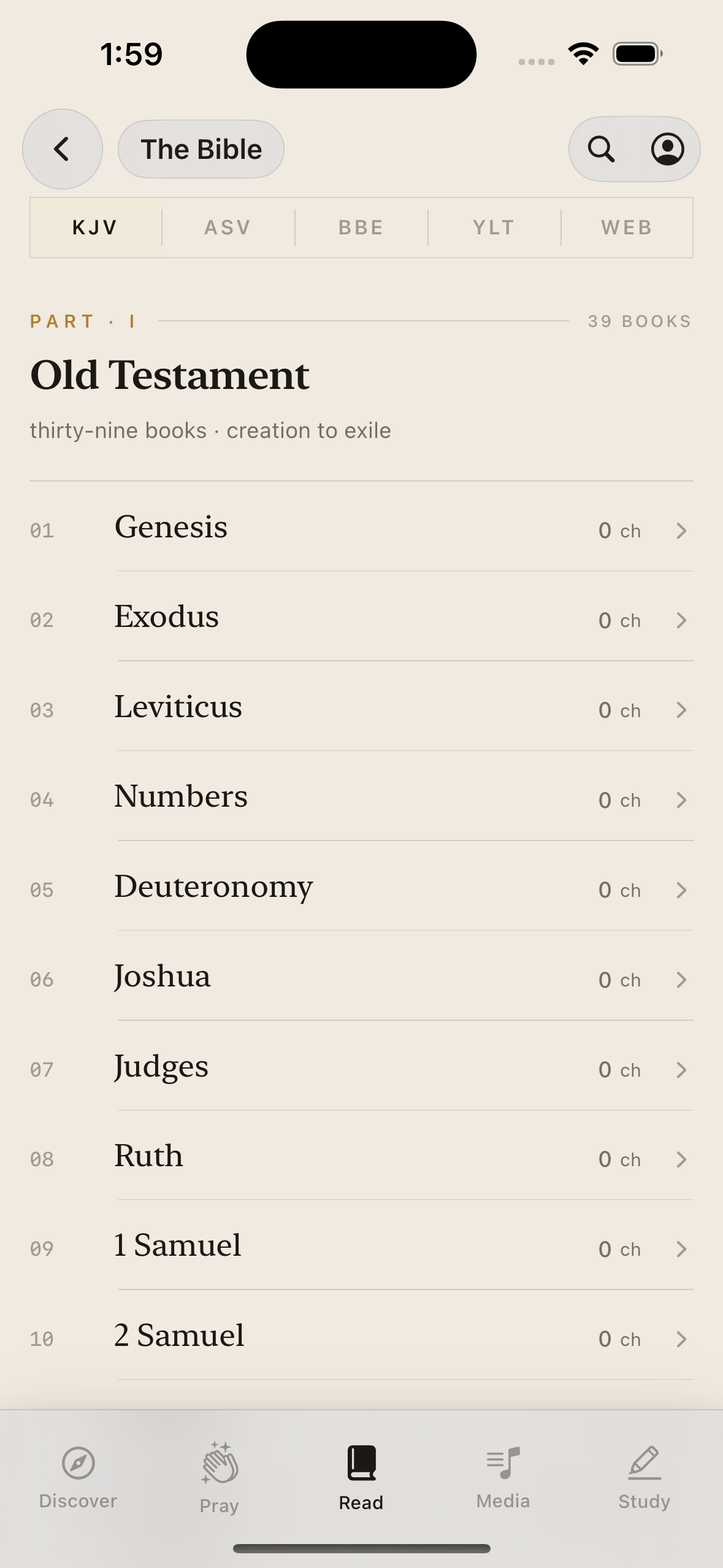

The answer is open content. The bundled database ships with four public-domain English Bibles: KJV, ASV, BBE, and YLT. The first imported addition is the World English Bible, fetched from eBible.org and parsed out of USFX into a per-translation table with an FTS5 search index. The next shelves are Meditations by Marcus Aurelius and the Dhammapada, both clean public-domain texts from Project Gutenberg, normalized into the same shape as the scripture tables.

The agent did the unglamorous middle. It wrote the import script, picked the schema, mapped the book ordering, set up the full-text search virtual table, and produced a rerunnable command. My job was to decide which sources to pull, what license posture to take, and how strict to be about provenance metadata. That split is where AI-first work earns its keep: the model handles the grammar of the import, I make the editorial calls.

Analysis: finding the unit of the app

The biggest design decision in Sacred Grove came out of an analysis pass, not a Figma session.

I had a Bible reader with comparative overlays. I wanted a multi-tradition research corpus. The model and I walked through the existing code and listed every place the UI was assuming “verse.” Selection was anchored to a VerseRecord. Share cards took verse text and a reference. Compare was organized around curated Bible pairs. Music attached to chapters through Bible-only metadata.

The conclusion was that the canonical interaction object had to change. The unit of the app is not a verse. It is a PassageSelection: a pointer into any work, in any tradition, at any granularity. Copy, compare, share, annotate, link to music, link to art, every interaction builds on top of it.
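As a rough illustration of that pointer, here is a minimal sketch. Only the name PassageSelection comes from the project as described here; the fields, the Granularity enum, and the reference helper are assumptions made for the example, not the shipping type.

```swift
import Foundation

// Hypothetical sketch: everything except the name PassageSelection is assumed.

/// How fine-grained a selection is.
enum Granularity: String {
    case work, section, segment, range
}

/// A pointer into any work, in any tradition, at any granularity.
struct PassageSelection: Hashable {
    let workID: String               // assumed identifier, e.g. "psalms" or "meditations"
    let versionID: String?           // e.g. "kjv"; nil when a work has a single text
    let sectionPath: [Int]           // e.g. [chapter] or [book, chapter]; empty = whole work
    let segments: ClosedRange<Int>?  // verse or paragraph numbers; nil = whole section

    /// Derives the granularity from which parts of the pointer are filled in.
    var granularity: Granularity {
        guard let segments = segments else {
            return sectionPath.isEmpty ? .work : .section
        }
        return segments.count == 1 ? .segment : .range
    }

    /// Builds a human-readable reference such as "Psalms 23:1-3".
    func reference(workTitle: String) -> String {
        var label = workTitle
        if !sectionPath.isEmpty {
            label += " " + sectionPath.map { String($0) }.joined(separator: ".")
        }
        if let segments = segments {
            label += segments.count == 1
                ? ":\(segments.lowerBound)"
                : ":\(segments.lowerBound)-\(segments.upperBound)"
        }
        return label
    }
}

// The same type addresses a verse range and a whole chapter of a non-biblical work.
let psalm = PassageSelection(workID: "psalms", versionID: "kjv",
                             sectionPath: [23], segments: 1...3)
let chapter = PassageSelection(workID: "dhammapada", versionID: nil,
                               sectionPath: [1], segments: nil)
print(psalm.reference(workTitle: "Psalms"))        // Psalms 23:1-3
print(chapter.reference(workTitle: "Dhammapada"))  // Dhammapada 1
```

The point of the shape is that share, compare, and annotate can all take one value type, with no branch on whether the source happens to be a Bible.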

That is a refactor that touches almost every screen. It is also the kind of insight that is hard to land in a meeting and easy to land in a conversation where you can ask the model to enumerate every coupling in the codebase before you commit.

Design: stable shell, adaptive atmosphere

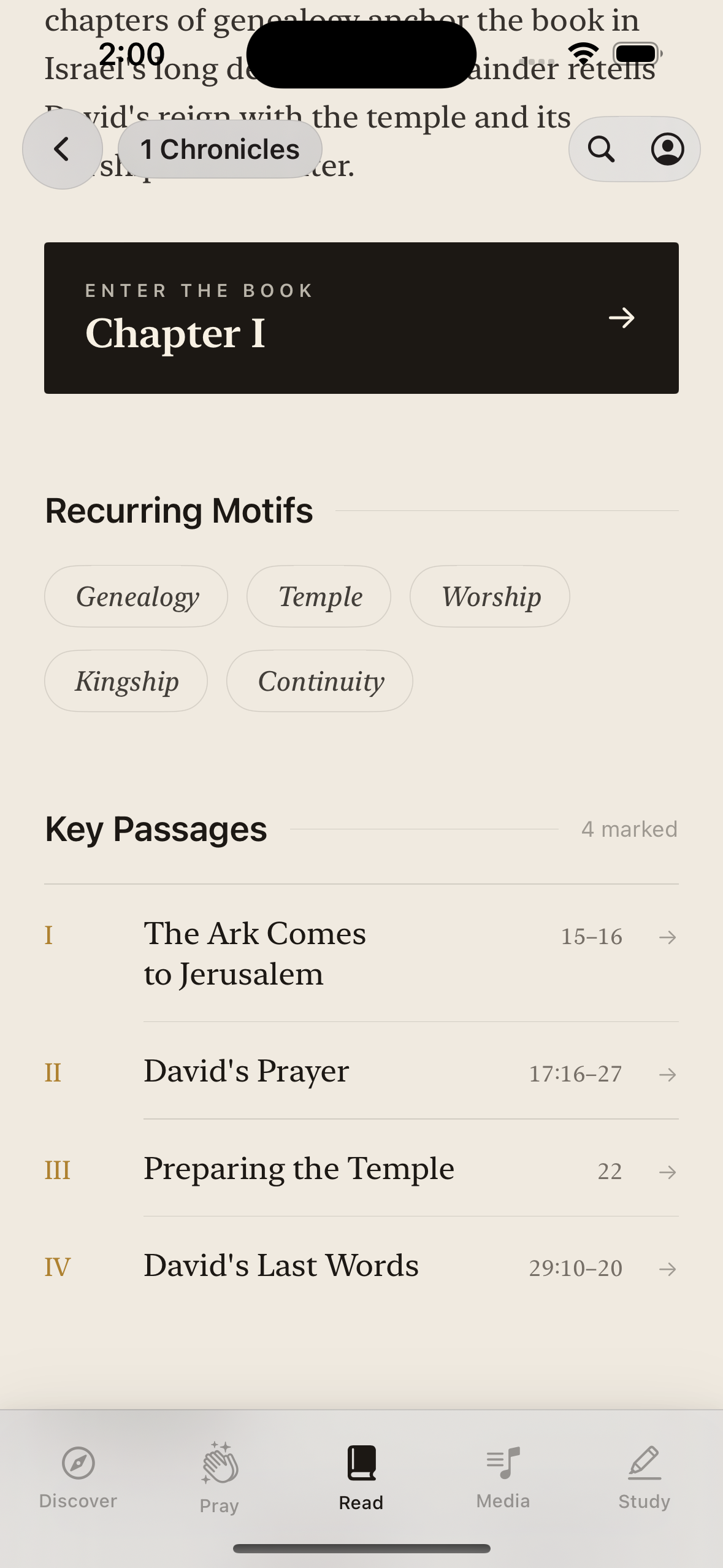

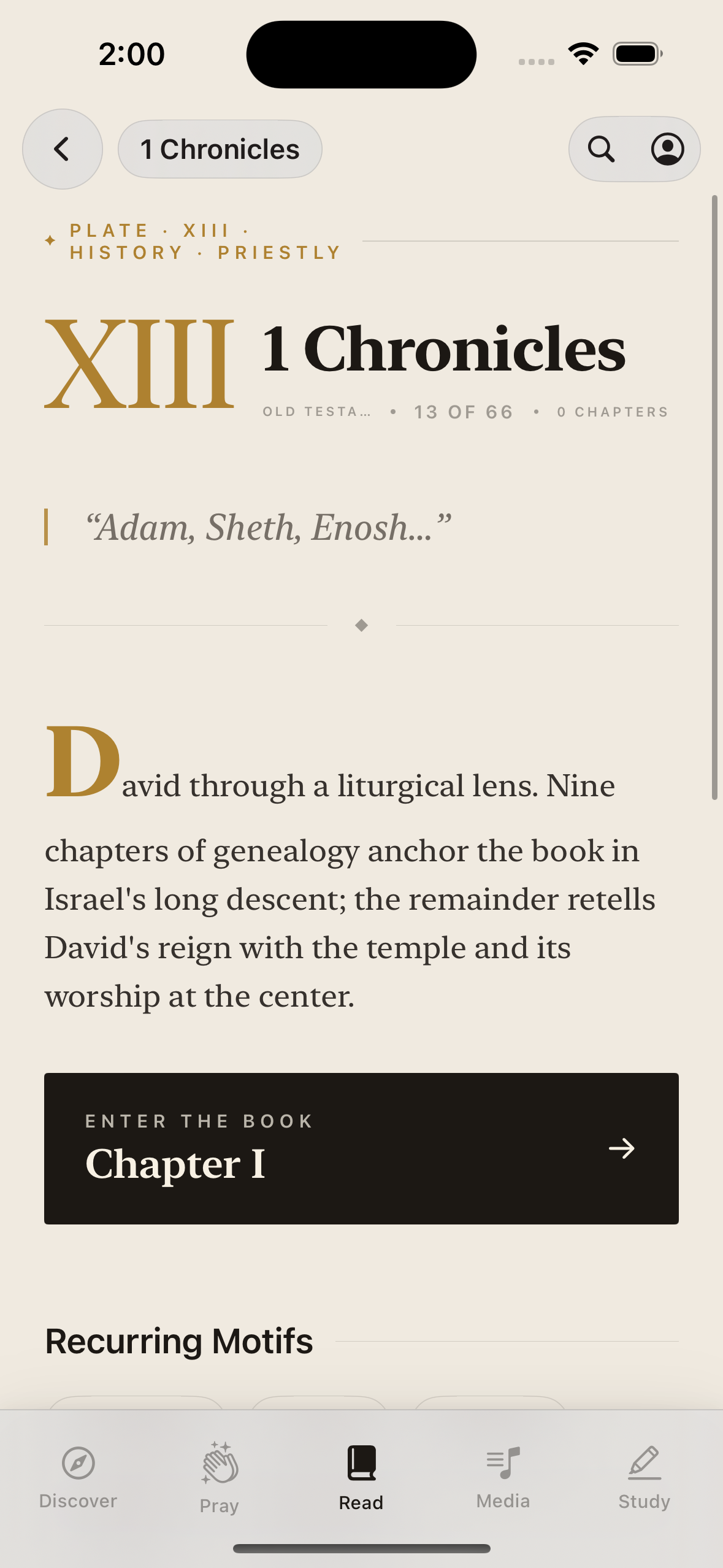

The product thesis is that the app should feel like moving through the greatest reading rooms, archives, and listening chambers in history, without literal page turns or fake bookshelves.

The principles, written before any screen:

- The shell is stable. Navigation, dock, header structure, spacing rhythm, motion timing. These do not change between a Psalm and a stanza of the Tao Te Ching.

- The atmosphere adapts. Background tones, hero imagery, accent metals, material density, media overlays, share-card styling. These shift with the work.

- Read, listen, compare, save, and quote are peer actions. None of them is a secondary utility tucked behind an overflow menu.

The design system is a Swift file, not a Figma library. Color, type, spacing, motion, and component shapes live in DesignSystem.swift next to the views that use them. Figma is downstream of the code, not upstream.
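To make the shell-versus-atmosphere split concrete, here is a sketch of how such a file could be organized. The type names, token values, hex colors, and work identifiers are all placeholders invented for the example; the real DesignSystem.swift may look quite different, and it would use SwiftUI types where this sketch uses plain values.

```swift
import Foundation

// Hypothetical sketch: names and values are illustrative, not the app's real tokens.

/// Stable shell: the same for every work.
enum Shell {
    static let spacingUnit: Double = 8        // assumed base of the spacing rhythm
    static let motionDuration: Double = 0.25  // assumed seconds for standard transitions
    /// Peer actions, all shown at the same level rather than in an overflow menu.
    static let peerActions = ["Read", "Listen", "Compare", "Save", "Quote"]
}

/// Adaptive atmosphere: shifts with the work being read.
struct Atmosphere: Equatable {
    let backgroundHex: String
    let accentMetalHex: String
    let heroImageName: String?
}

enum DesignSystem {
    /// Fallback used when a work has no dedicated atmosphere.
    static let defaultAtmosphere = Atmosphere(
        backgroundHex: "#14110F", accentMetalHex: "#C9A227", heroImageName: nil)

    /// Per-work overrides, keyed by an assumed work identifier.
    private static let atmospheres: [String: Atmosphere] = [
        "psalms": Atmosphere(backgroundHex: "#1B1A17", accentMetalHex: "#D4AF37",
                             heroImageName: "hero-psalms"),
        "meditations": Atmosphere(backgroundHex: "#1A1C1E", accentMetalHex: "#B87333",
                                  heroImageName: "hero-meditations"),
    ]

    /// Returns the atmosphere for a work, falling back to the default.
    static func atmosphere(forWorkID id: String) -> Atmosphere {
        return atmospheres[id] ?? defaultAtmosphere
    }
}

let current = DesignSystem.atmosphere(forWorkID: "meditations")
print(current.accentMetalHex, Shell.motionDuration)
```

Views read Shell directly and never look up a per-work value for it, which is what keeps navigation and timing identical from one tradition to the next.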

Development: SwiftUI as the rendering layer

The app is a SwiftUI project in Xcode. SQLite is the runtime store, with an FTS5 index for search and structured tables for works, versions, sections, and segments. SwiftData holds user state: notes, highlights, bookmarks, research links. Audio is a first-class peer of text, with a mini player and a now-playing surface modeled after a music app rather than an ebook reader.
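The four structured tables map naturally onto four value types. The sketch below assumes column names and keys that are not taken from the actual schema, and it filters an in-memory array where the app would run a SQLite query.

```swift
import Foundation

// Hypothetical row types mirroring the works / versions / sections / segments tables.
// Field names and key choices are assumptions for illustration.

struct Work {
    let id: String         // e.g. "meditations"
    let title: String
    let tradition: String  // e.g. "Stoic"
}

struct Version {
    let id: String         // e.g. "kjv"
    let workID: String     // references Work.id
    let label: String
}

struct Section {
    let id: Int
    let versionID: String  // references Version.id
    let ordinal: Int       // reading order within the version
    let title: String
}

struct Segment {
    let sectionID: Int     // references Section.id
    let ordinal: Int       // verse or paragraph number within the section
    let text: String
}

/// Returns the segments of one section in reading order.
/// Stands in for a SQL query; the real store would do this in SQLite.
func segments(in section: Section, from all: [Segment]) -> [Segment] {
    return all
        .filter { $0.sectionID == section.id }
        .sorted { $0.ordinal < $1.ordinal }
}

let bookFour = Section(id: 4, versionID: "meditations-en", ordinal: 4, title: "Book 4")
let rows = [
    Segment(sectionID: 4, ordinal: 2, text: "(second paragraph)"),
    Segment(sectionID: 3, ordinal: 1, text: "(other section)"),
    Segment(sectionID: 4, ordinal: 1, text: "(first paragraph)"),
]
for segment in segments(in: bookFour, from: rows) {
    print(segment.ordinal, segment.text)
}
```

Because scripture and non-scripture texts share the section and segment shape, a verse and a paragraph of Marcus Aurelius are the same kind of row to everything downstream.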

The agentic workflow runs all the way through implementation. I write the brief in plain English, the model produces a Swift file, I read it the way I would read a junior engineer’s pull request, I push back on the choices that are wrong, and we converge. The loop is fast enough that the bottleneck is taste, not typing.

Why this is a planting note

A case study explains a finished thing. Sacred Grove is not finished. Right now it is a thesis, a corpus, a design system, and a working iOS build. I am writing this entry to mark the moment the workflow itself became the subject worth documenting.

The full case study will cover the screens, the typography, the audio model, the share cards, the multi-tradition expansion, and the question every AI-first project eventually has to answer: what does the human do?